By Zeljko Bavcevic

Geovis Class Project @RyersonGeo, SA8905, 2018

- Introduction

The purpose of this series of posts is to serve as a record of my work on the SA8905 Geovisualization project. The broad aim of this project is to explore the complex relationship between language and geography, and how each serves as a mediating factor on the other. I have long been fascinated by how geography affects language and, by proxy, populations, borders and culture. Specifically, I hoped to understand how changes in the traversability of landscapes would affect migrations of people and thus language.

Over the course of the research phase of this project, I realized that covering all of the languages of the world would require an amount of data collection and cleaning considerably beyond the time available in a single class. As such, I narrowed my goal to examining only the languages of the Balkan Peninsula, and how they relate to one another in terms of linguistic diversity, lexical distance and speaker population.

- Research

The first stage in this process required finding data to operationalize my target variables. This proved more difficult than I had first expected for a number of reasons. Firstly, there is no single, globally agreed-upon set of variables for understanding language. Instead, there are a number of competing variable sets, each maintained by a different organization (with very different incentives).

Eventually I narrowed my focus to three primary linguistic variables for visualization:

- Speaker Population: The number of individuals in the world estimated to speak a certain language. The value is largely based on a projection.

- Lexical Distance: A linguistic variable measuring the conceptual distance between languages by comparing each along a number of criteria such as common words, verb formations and other comparative measures.

- Linguistic Diversity: An index score that measures the different types of languages, dialects or variations spoken within the regions of the primary language.
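To make the idea of a diversity index more concrete, here is a minimal sketch of one common formulation, Greenberg's diversity index: the probability that two people picked at random from a region speak different languages. Whether the purchased data set computes its score in exactly this way is an assumption on my part, and the speaker counts below are made-up placeholders rather than real figures.

```python
def greenberg_diversity(speaker_counts):
    """Return 1 - sum(p_i^2), where p_i is each language's share of speakers.

    0.0 means everyone speaks the same language; values approaching 1.0
    mean speakers are spread across many languages.
    """
    total = sum(speaker_counts)
    if total <= 0:
        raise ValueError("speaker_counts must sum to a positive number")
    return 1.0 - sum((count / total) ** 2 for count in speaker_counts)


# Hypothetical speaker counts, for illustration only (not SIL figures).
single_language_region = [1000]
evenly_split_region = [500, 500]

print(greenberg_diversity(single_language_region))
print(greenberg_diversity(evenly_split_region))
```

A region with one language scores zero, and the score rises as speakers are split more evenly across more languages.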

- Data

The data for these variables are generated and maintained by two primary organizations, SIL International and UNESCO. SIL International compiles data on a number of relevant linguistic variables for sale to organizations. UNESCO, on the other hand, is an international non-profit organization. The two data sets are very different in their methodologies and as such cannot be combined or used in conjunction. For this reason, I elected to use only one of the data sets. Although the UNESCO data was free, the format it was kept in would have required a laborious process of cleaning and transformation before it could easily be used in my model. As such, I reached out to SIL International for a quote and acquired the data I needed. It must be noted, for the purposes of transparency, that SIL is a Christian organization and there have been several concerns about its methodology and incentive structure. To assess the impact of these on my outcome, I did a brief comparison between a sub-sample of the UNESCO and SIL data sets and was satisfied that the differences were within acceptable parameters.
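A comparison of this kind can be sketched in a few lines. The function below is only an illustration of the general idea, not the exact check I ran: it takes two dictionaries mapping a language name to an estimated speaker population and reports the relative difference for every language present in both. The function name, the dictionary layout and the sample values are all hypothetical.

```python
def relative_differences(source_a, source_b):
    """Relative gap between two speaker estimates for each shared language."""
    shared = sorted(set(source_a) & set(source_b))
    result = {}
    for language in shared:
        a, b = source_a[language], source_b[language]
        largest = max(a, b)
        # Guard against division by zero when both estimates are zero.
        result[language] = abs(a - b) / largest if largest else 0.0
    return result


# Placeholder values for illustration only (not real SIL or UNESCO data).
sample_a = {"Language A": 100, "Language B": 50}
sample_b = {"Language A": 80, "Language C": 5}

for language, gap in relative_differences(sample_a, sample_b).items():
    print(f"{language}: {gap:.0%} difference")
```

Languages that appear in only one source are skipped, so the result reflects only the overlapping sub-sample.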

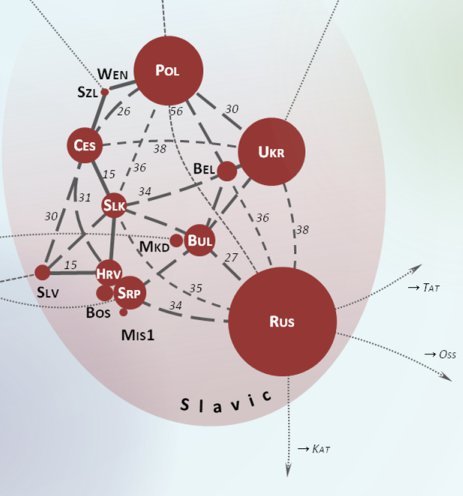

During my research, I found a number of illustrations of lexical distance. Most often these took the form of node or network charts depicting the different languages (Figure 1).

Figure 1: Lexical Distance Network

However, these were all static and non-spatial, and often did not take into account other relevant linguistic variables such as linguistic diversity within a language class. As such, I wanted my own visualization to be dynamic, interactive and spatial, and to include those other variables. To this end I needed to find a technology or platform that would allow me sufficient customizability and interaction. Inspired by the network or node maps I had consistently seen throughout my research phase, I knew that I wanted to build on this concept by adding a spatial and interactive component.

- Technology

I considered a number of options. The first and most obvious was using Python to code a network map using the Gephi platform. While pure coding would offer the most freedom and customizability, hosting the various tools I needed would have proved very tedious and costly. As such, I set out in search of a hosted node or network analysis platform. After considerable research into a number of possible candidates, I opted for kumu.io.

Kumu was selected because it allowed me the freedom of coding most of my map to my specifications (on top of having a very user-friendly UI), while also hosting all of my data and tools natively. This reduced the technical “surface area” of the project, which in turn reduces opportunities for code-breaking bugs and cross-platform communication errors. After paying the modest membership fee, I began adding my data to Kumu.

- Execution

The first stage of development was loading my SIL data into Kumu. This was made easy by Kumu’s data cleaning tool, which lets the user make sure all the input data meets Kumu’s formatting requirements and even allows the user to dynamically change spreadsheet documents before upload.
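For readers who would rather prepare the spreadsheet in code before uploading it, the sketch below writes an elements sheet as CSV. It assumes Kumu's documented convention that each element row has a "Label" column, with any further columns treated as extra fields; the "Speaker Population" and "Linguistic Diversity" column names, the input layout and the sample row are my own placeholders, not the actual SIL export.

```python
import csv
import io

# Column names other than "Label" and "Type" are hypothetical custom fields.
FIELDS = ["Label", "Type", "Speaker Population", "Linguistic Diversity"]


def elements_to_csv(languages):
    """Serialize a list of language records into an elements sheet (CSV text)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    for lang in languages:
        writer.writerow({
            "Label": lang["name"],
            "Type": "Language",
            "Speaker Population": lang["speakers"],
            "Linguistic Diversity": lang["diversity"],
        })
    return buffer.getvalue()


# Placeholder record for illustration only.
print(elements_to_csv([{"name": "Language A", "speakers": 1000, "diversity": 0.5}]))
```

The resulting text can be saved to a file and checked in the upload tool like any other spreadsheet.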

Kumu’s Upload Wizard

After this was complete I created a bi-directional connection between each pair of languages (or elements, in network analysis parlance). This resulted in an ugly and incomprehensible visual bundle of connections. The next stage of the process was coding the symbology for each variable and the interface options (a search, zoom and selection toolbar).
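Connecting every language to every other language can be sketched as follows. This is an illustration under assumptions rather than the steps I took inside Kumu: it assumes connection rows are described by "From" and "To" columns, and the "Lexical Distance" column, the lookup dictionary and the labels are hypothetical.

```python
from itertools import combinations


def pairwise_connections(labels, distance):
    """Build one connection row for every unordered pair of labels.

    `distance` maps an alphabetically ordered (a, b) tuple to a score;
    pairs without a known score get None.
    """
    rows = []
    for a, b in combinations(sorted(labels), 2):
        rows.append({"From": a, "To": b, "Lexical Distance": distance.get((a, b))})
    return rows


# Placeholder labels and score for illustration only.
rows = pairwise_connections(["C", "A", "B"], {("A", "B"): 10})
for row in rows:
    print(row)
```

With n languages this produces n(n-1)/2 connections, which grows quickly and goes some way to explaining why the unstyled map looked like a tangled bundle.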

Kumu’s Advanced Code Based Editor

This was done using Kumu’s advanced coding editor and I encountered no issues during this stage. However, when I attempted to add the polygons of the various countries of the Balkan Peninsula, the map visualization would simply vanish, and I was not able to troubleshoot this with any success. As such, I ultimately had to abandon the spatial component of the project due to time constraints. I was still very satisfied with the resulting output.

- Challenges

The Final Output

A number of challenges were encountered during the course of this project. The primary issue was that the geographic overlay failed to load. Every attempt I made to fix this was unsuccessful, and ultimately it prevented me from completing the project as I had conceived it at the design stage. Nonetheless, I believe that the other elements of the project still satisfied the requirement of producing a novel and interesting geovisualization.