by Alexander Pardy

Geovis Class Project @RyersonGeo, SA8905, Fall 2017

Data and Data Cleaning:

To obtain my data I used https://moviemaps.org/ and selected Toronto the website displays a map that shows locations in the Greater Toronto Area where movies and television shows were filmed. The point locations are overlaid on top of Google Maps imagery.

If you use the inspect element tool in internet explorer, you can find a single line of JavaScript code within the map section of the webpage that contains the latitude and longitude of every single point.

The data is in a similar format to python code. The entire line of JavaScript code was inputted into a Python script. The python script writes the data into a CSV file that can then easily be opened in Microsoft Excel. Once the file was opened in Excel, Google was used to search for the setting of each and every single movie or television show, using the results of various different websites such as fan websites, IMDB, or Wikipedia. Some locations take place in fictional towns and cities, in this case locations were approximated using best judgement to find a similar location to the setting. All the information was than saved into a CSV file. Python was then used to delete out any duplicates in the CSV file and was used to give a count of each unique location value. This gives the total number of movies and television shows filmed at each different geographical location. The file was than saved out of python back into a CSV file. The latitude and longitude coordinates for each location was than obtained from Google and inputted into the CSV file. An example is shown below.

Geospatial Work:

The CSV file was inputted into QGIS as a delimited text layer with the coordinate system WGS 84. The points were than symbolized using a graduated class method based on a classified count of the number of movies or television shows filmed in Toronto. A world country administrative shape file was obtained from the Database of Global Administrative Areas (GADM). There was a slight issue with this shapefile, the shapefile had too much data and every little island on the planet was represented in this shapefile. Since we are working at a global scale the shapefile contained too much detail for the scope of this project.

Using WGS 84 the coordinate system positions the middle of the map at the prime meridian and the equator. Since a majority of the films and television shows are based in North America, a custom world projection was created. This was accomplished in QGIS by going into Settings, Custom CRS, and selecting World Robinson projection. The parameters of this projection was then changed to change the longitude instead of being the prime meridian at 0 degrees, it was changed to -75 degrees to better center North America in the middle of the map. An issue came up after completing this is that a shapefile cannot be wrapped around a projection in QGIS.

After researching how to fix this, it was found that it can be accomplished by deleting out the area where the wrap around occurs. This can be accomplished by deleting the endpoints of where the occurrence happens. This is done by creating a text file that says:

This text box defines the corners of a polygon we wish to create in QGIS. A layer can now be created from the delimited text file, using custom delimiters set to semi colon and well-known text. It creates a polygon on our map, which is a very small polygon that looks like a line. Then by going into Vector, Geoprocessing Tools, Difference and selecting the input layer as the countries layer and the difference layer as the polygon that was created. Once done it gives a new country layer with a very thin part of the map deleted out (this is where the wrap around occurred). Now the map wraps around fine and is not stretched out. There is still a slight problem in Antarctica so it was selected and taken out of the map.

Styling:

The shapefile background was made grey with white hairlines to separate the countries. The count and size of the locations was kept the same. The locations were made 60% transparent. Since there was not a lot of different cities the symbols were classified to be in 62 classes, therefore each time the number increased, the size of the point would increase. The map is now complete. A second map was added in the print composer section to show a zoomed in section of North America. Labels and lines were then added into the map using Illustrator.

Story Map:

I felt that after the map was made a visualization should also be created to help covey the map that was created by being able to tell a story of the different settings of films and television shows that were filmed in Toronto. I created a ESRI story map that can be found Here .

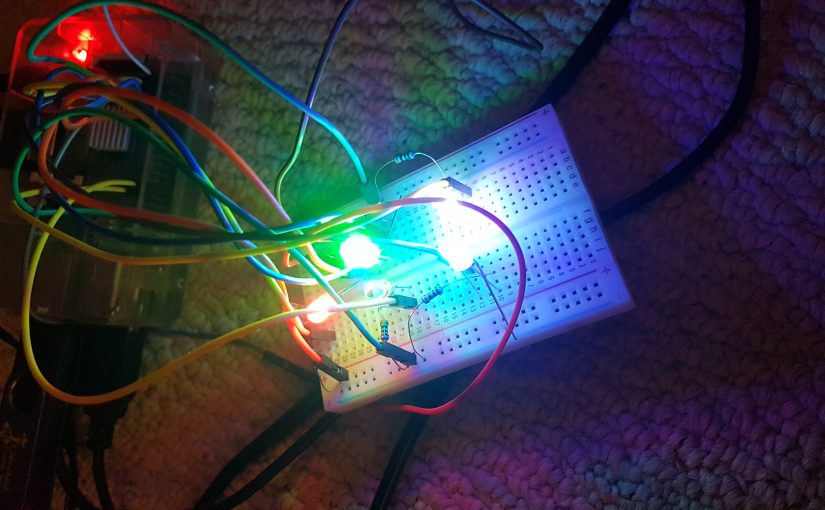

The Story Map shows 45 points on a world map, these are all based on the setting of television shows and movies that were filmed in the City of Toronto. The points on the map are colour coded. Red point locations had 4-63 movie and television shows set around the points. Blue point locations had 2-3 movie and television shows set around the points. Green point locations had 1 movie or television show set around the point. When you click on a point it brings you to a closer view of the city the point is located in. It also brings up a description that tells you the name of the place you are viewing and the number of movies and television shows whose settings takes place in that location. You also have the option to play a selected movie or television show trailer from YouTube in the story map to give you an idea of what was filmed in Toronto but is conveyed by the media industry to be somewhere else.